Fourth-Year Project — Embodied conversational agents in augmented reality

October 2000 to May 2001

My fourth-year project was to research and develop an Augmented Reality system for instructional use. My supervisor for this project was Dr Patrick Olivier.

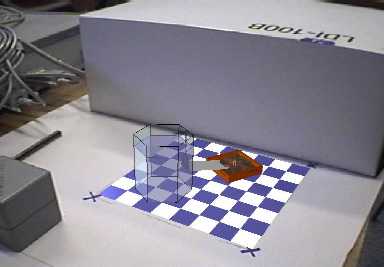

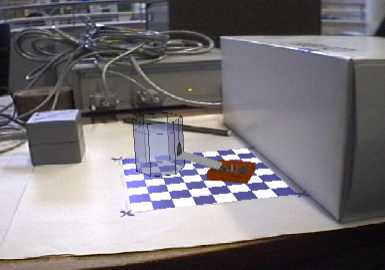

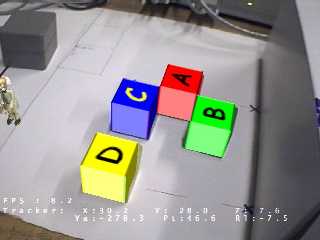

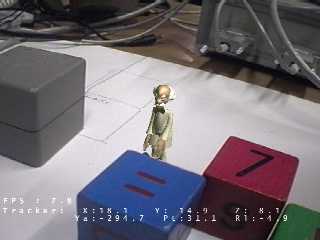

Augmented Reality allows a user to have their view of the world augmented with additional information. For example, someone wishing to repair a photocopier could have their view of the photocopier enhanced with information such as which parts to inspect or which panel to remove. In particular, I was interested in adding a virtual assistant to the user's world to help them with their tasks. As an example, the user should be able to see the virtual assistant as it walks around the augmented world, speak to it, and have the assistant understand and reply with both speech and gesture.

Abstract: Passive methods of instruction, such as books and videos, are commonplace, but are unable to match the flexibility and expressiveness of human instructors. Spoken natural language is an important part of our communication with each other, yet remains largely unused in our interaction with technology. Embodied conversational agents are animated computer software characters able to converse with users through the understanding and generation of speech, and they often employ gesture to further enhance their expressive powers. Such characters may be used for instructional purposes, combining the benefits of a faultless, static reference with the empathic nature of human mentors. Augmented reality, the addition of virtual elements to a view of the real world, is one of the many challenging and diverse fields of research within Computer Science. A system is sought that combines the ability of augmented reality to add information to a view of the real world with the versatile nature of embodied conversational agents. The project successfully introduces, reviews, designs, implements, and evaluates an embodied agent-based augmented reality system. The system places particular emphasis on the facial animation and lip-synch of the character, which are driven by a muscle-based animation system that was researched and implemented as part of the project.
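The abstract mentions a muscle-based animation system but does not describe how it works. Purely as an illustration, here is a minimal sketch of a simplified Waters-style linear muscle, a well-known muscle model for facial animation. It is an assumption, not the project's actual implementation. The function name `linear_muscle_displace` and its parameters (`k` for contraction, `rs`/`rf` for the radial falloff bounds, `omega` for the angular zone of influence) are hypothetical. A contracting muscle pulls nearby skin vertices towards its bony attachment point, with the effect fading smoothly by angle and distance.

```python
import math


def _sub(a, b):
    return tuple(x - y for x, y in zip(a, b))


def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def _norm(a):
    return math.sqrt(_dot(a, a))


def linear_muscle_displace(p, v1, v2, k, rs, rf, omega):
    """Hypothetical sketch of a simplified Waters-style linear muscle.

    p:     skin vertex to displace (3-tuple)
    v1:    muscle attachment point on the bone (fixed)
    v2:    muscle insertion point in the skin
    k:     contraction amount, e.g. in [0, 1]
    rs:    distance from v1 at which the radial effect peaks
    rf:    distance from v1 beyond which there is no effect
    omega: half-angle (radians) of the zone of influence around v1->v2
    """
    if not 0.0 < rs < rf:
        raise ValueError("expected 0 < rs < rf")
    to_p = _sub(p, v1)
    d = _norm(to_p)
    if d == 0.0 or d > rf:
        return p  # at the attachment point or outside the radial zone
    axis = _sub(v2, v1)
    cos_alpha = _dot(axis, to_p) / (_norm(axis) * d)
    cos_alpha = max(-1.0, min(1.0, cos_alpha))  # guard acos against rounding
    if math.acos(cos_alpha) > omega:
        return p  # outside the angular zone of influence
    a = cos_alpha  # angular falloff: strongest along the muscle axis
    if d < rs:
        # Effect rises from 0 at v1 to 1 at distance rs.
        r = math.cos((1.0 - d / rs) * math.pi / 2)
    else:
        # Effect falls from 1 at rs to 0 at rf.
        r = math.cos((d - rs) / (rf - rs) * math.pi / 2)
    # Move p a distance a*k*r along the unit vector from p towards v1.
    scale = a * k * r / d
    return tuple(pi - scale * ti for pi, ti in zip(p, to_p))
```

For example, `linear_muscle_displace((0.5, 0.0, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), 0.2, 0.5, 1.0, math.pi / 4)` pulls the vertex 0.2 units towards the attachment point. In a full face model, displacements from several such muscles would typically be accumulated per vertex each frame.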